How To Set Up An Appendix

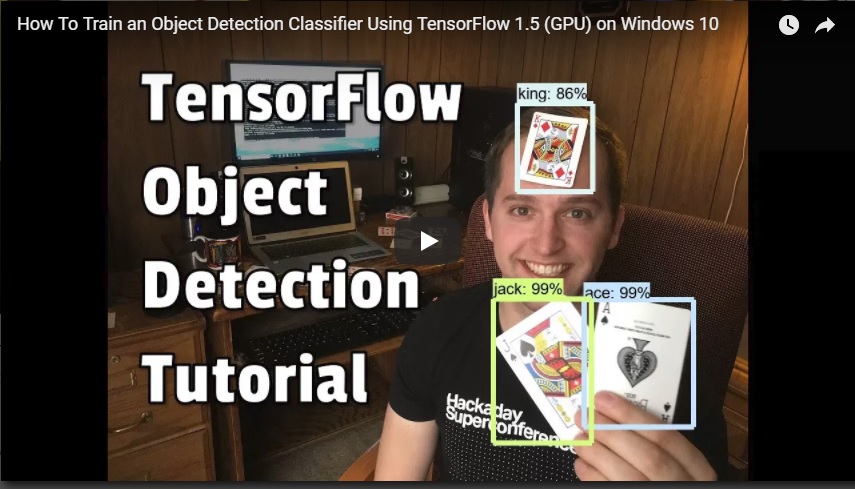

How To Train an Object Detection Classifier for Multiple Objects Using TensorFlow (GPU) on Windows 10

Brief Summary

Last updated: 6/22/2019 with TensorFlow v1.13.1

This repository is a tutorial for how to use TensorFlow's Object Detection API to train an object detection classifier for multiple objects on Windows 10, 8, or 7. (It will also work on Linux-based OSes with some minor changes.) It was originally written using TensorFlow version 1.5, but will also work for newer versions of TensorFlow.

Translated versions of this guide are listed below. If you would like to contribute a translation in another language, please feel free! You can add it as a pull request and I will merge it when I get the chance.

- Korean translation (thank you @cocopambag!)

- Chinese translation (thanks @Marco-Ray!)

- Vietnamese translation (thanks @winter2897!)

I also made a YouTube video that walks through this tutorial. Any discrepancies between the video and this written tutorial are due to updates required for using newer versions of TensorFlow.

If there are differences between this written tutorial and the video, follow the written tutorial!

This readme describes every step required to get going with your own object detection classifier:

- Installing Anaconda, CUDA, and cuDNN

- Setting up the Object Detection directory structure and Anaconda Virtual Environment

- Gathering and labeling pictures

- Generating training data

- Creating a label map and configuring training

- Training

- Exporting the inference graph

- Testing and using your newly trained object detection classifier

Appendix: Common Errors

The repository provides all the files needed to train a "Pinochle Deck" playing card detector that can accurately detect nines, tens, jacks, queens, kings, and aces. The tutorial describes how to replace these files with your own files to train a detection classifier for whatever your heart desires. It also has Python scripts to test your classifier out on an image, video, or webcam feed.

Introduction

The purpose of this tutorial is to explain how to train your own convolutional neural network object detection classifier for multiple objects, starting from scratch. At the end of this tutorial, you will have a program that can identify and draw boxes around specific objects in pictures, videos, or in a webcam feed.

There are several good tutorials available for how to use TensorFlow's Object Detection API to train a classifier for a single object. However, these usually assume you are using a Linux operating system. If you're like me, you might be a little hesitant to install Linux on your high-powered gaming PC that has the sweet graphics card you're using to train a classifier. The Object Detection API seems to have been developed on a Linux-based OS. To set up TensorFlow to train a model on Windows, there are several workarounds that need to be used in place of commands that would work fine on Linux. Also, this tutorial provides instructions for training a classifier that can detect multiple objects, not just one.

The tutorial is written for Windows 10, and it will also work for Windows 7 and 8. The general procedure can also be used for Linux operating systems, but file paths and package installation commands will need to change accordingly. I used TensorFlow-GPU v1.5 while writing the initial version of this tutorial, but it will likely work for future versions of TensorFlow.

TensorFlow-GPU allows your PC to use the video card to provide extra processing power while training, so it will be used for this tutorial. In my experience, using TensorFlow-GPU instead of regular TensorFlow reduces training time by a factor of about 8 (3 hours to train instead of 24 hours). The CPU-only version of TensorFlow can also be used for this tutorial, but it will take longer. If you use CPU-only TensorFlow, you do not need to install CUDA and cuDNN in Step 1.

Steps

1. Install Anaconda, CUDA, and cuDNN

Anaconda is a software toolkit that creates virtual Python environments so you can install and use Python libraries without worrying about creating version conflicts with existing installations. Anaconda works well on Windows, and enables you to use many Python libraries that normally would only work on a Linux system. It provides a simple method for installing TensorFlow (which we'll do in Step 2d). It also automatically installs the CUDA and cuDNN versions you need for using TensorFlow on a GPU.

Download Anaconda for Windows from their webpage (you have to scroll down a ways to get to the download links). Once it's downloaded, execute the installer file and work through the installation steps.

If you are using a version of TensorFlow older than TF v1.13, make sure you use the CUDA and cuDNN versions that are compatible with the TensorFlow version you are using. Here is a table showing which version of TensorFlow requires which versions of CUDA and cuDNN. Anaconda will automatically install the correct version of CUDA and cuDNN for the version of TensorFlow you are using, so you shouldn't have to worry about this.

2. Set up TensorFlow Directory and Anaconda Virtual Environment

The TensorFlow Object Detection API requires using the specific directory structure provided in its GitHub repository. It also requires several additional Python packages, specific additions to the PATH and PYTHONPATH variables, and a few extra setup commands to get everything set up to run or train an object detection model.

This portion of the tutorial goes over the full set up required. It is fairly meticulous, but follow the instructions closely, because improper setup can cause unwieldy errors down the road.

2a. Download TensorFlow Object Detection API repository from GitHub

Create a folder directly in C: and name it "tensorflow1". This working directory will contain the full TensorFlow object detection framework, as well as your training images, training data, trained classifier, configuration files, and everything else needed for the object detection classifier.

Download the full TensorFlow object detection repository located at https://github.com/tensorflow/models by clicking the "Clone or Download" button and downloading the zip file. Open the downloaded zip file and extract the "models-master" folder directly into the C:\tensorflow1 directory you just created. Rename "models-master" to just "models".

Note: The TensorFlow models repository's code (which contains the object detection API) is continuously updated by the developers. Sometimes they make changes that break functionality with old versions of TensorFlow. It is always best to use the latest version of TensorFlow and download the latest models repository. If you are not using the latest version, clone or download the commit for the version you are using as listed in the table below.

If you are using an older version of TensorFlow, here is a table showing which GitHub commit of the repository you should use. I generated this by going to the release branches for the models repository and getting the commit before the last commit for the branch. (They remove the research folder as the last commit before they create the official version release.)

| TensorFlow version | GitHub Models Repository Commit |
|---|---|
| TF v1.7 | https://github.com/tensorflow/models/tree/adfd5a3aca41638aa9fb297c5095f33d64446d8f |
| TF v1.8 | https://github.com/tensorflow/models/tree/abd504235f3c2eed891571d62f0a424e54a2dabc |
| TF v1.9 | https://github.com/tensorflow/models/tree/d530ac540b0103caa194b4824af353f1b073553b |
| TF v1.10 | https://github.com/tensorflow/models/tree/b07b494e3514553633b132178b4c448f994d59df |
| TF v1.11 | https://github.com/tensorflow/models/tree/23b5b4227dfa1b23d7c21f0dfaf0951b16671f43 |
| TF v1.12 | https://github.com/tensorflow/models/tree/r1.12.0 |
| TF v1.13 | https://github.com/tensorflow/models/tree/r1.13.0 |
| Latest version | https://github.com/tensorflow/models |

This tutorial was originally done using TensorFlow v1.5 and this GitHub commit of the TensorFlow Object Detection API. If portions of this tutorial do not work, it may be necessary to install TensorFlow v1.5 and use this exact commit rather than the most up-to-date version.

2b. Download the Faster-RCNN-Inception-V2-COCO model from TensorFlow's model zoo

TensorFlow provides several object detection models (pre-trained classifiers with specific neural network architectures) in its model zoo. Some models (such as the SSD-MobileNet model) have an architecture that allows for faster detection but with less accuracy, while some models (such as the Faster-RCNN model) give slower detection but with more accuracy. I initially started with the SSD-MobileNet-V1 model, but it didn't do a very good job identifying the cards in my images. I re-trained my detector on the Faster-RCNN-Inception-V2 model, and the detection worked considerably better, but with a noticeably slower speed.

You can choose which model to train your object detection classifier on. If you are planning on using the object detector on a device with low computational power (such as a smart phone or Raspberry Pi), use the SSD-MobileNet model. If you will be running your detector on a decently powered laptop or desktop PC, use one of the RCNN models.

This tutorial will use the Faster-RCNN-Inception-V2 model. Download the model here. Open the downloaded faster_rcnn_inception_v2_coco_2018_01_28.tar.gz file with a file archiver such as WinZip or 7-Zip and extract the faster_rcnn_inception_v2_coco_2018_01_28 folder to the C:\tensorflow1\models\research\object_detection folder. (Note: The model date and version will likely change in the future, but it should still work with this tutorial.)

2c. Download this tutorial's repository from GitHub

Download the full repository located on this page (scroll to the top and click Clone or Download) and extract all the contents directly into the C:\tensorflow1\models\research\object_detection directory. (You can overwrite the existing "README.md" file.) This establishes a specific directory structure that will be used for the rest of the tutorial.

At this point, here is what your \object_detection folder should look like:

This repository contains the images, annotation data, .csv files, and TFRecords needed to train a "Pinochle Deck" playing card detector. You can use these images and data to practice making your own Pinochle Card Detector. It also contains Python scripts that are used to generate the training data. It has scripts to test out the object detection classifier on images, videos, or a webcam feed. You can ignore the \doc folder and its files; they are just there to hold the images used for this readme.

If you want to practice training your own "Pinochle Deck" card detector, you can leave all the files as they are. You can follow along with this tutorial to see how each of the files were generated, and then run the training. You will still need to generate the TFRecord files (train.record and test.record) as described in Step 4.

You can also download the frozen inference graph for my trained Pinochle Deck card detector from this Dropbox link and extract the contents to \object_detection\inference_graph. This inference graph will work "out of the box". You can test it after all the setup instructions in Step 2a - 2f have been completed by running the Object_detection_image.py (or video or webcam) script.

If you want to train your own object detector, delete the following files (do not delete the folders):

- All files in \object_detection\images\train and \object_detection\images\test

- The "test_labels.csv" and "train_labels.csv" files in \object_detection\images

- All files in \object_detection\training

- All files in \object_detection\inference_graph

Now, you are ready to start from scratch in training your own object detector. This tutorial will assume that all the files listed above were deleted, and will go on to explain how to generate the files for your own training dataset.

2d. Set up new Anaconda virtual environment

Next, we'll work on setting up a virtual environment in Anaconda for tensorflow-gpu. From the Start menu in Windows, search for the Anaconda Prompt utility, right click on it, and click "Run as Administrator". If Windows asks you if you would like to allow it to make changes to your computer, click Yes.

In the command terminal that pops up, create a new virtual environment called "tensorflow1" by issuing the following command:

    C:\> conda create -n tensorflow1 pip python=3.5

Then, activate the environment and update pip by issuing:

    C:\> activate tensorflow1
    (tensorflow1) C:\>python -m pip install --upgrade pip

Install tensorflow-gpu in this environment by issuing:

    (tensorflow1) C:\> pip install --ignore-installed --upgrade tensorflow-gpu

Since we're using Anaconda, installing tensorflow-gpu will also automatically download and install the correct versions of CUDA and cuDNN.

(Note: You can also use the CPU-only version of TensorFlow, but it will run much slower. If you want to use the CPU-only version, just use "tensorflow" instead of "tensorflow-gpu" in the previous command.)

Install the other necessary packages by issuing the following commands:

    (tensorflow1) C:\> conda install -c anaconda protobuf
    (tensorflow1) C:\> pip install pillow
    (tensorflow1) C:\> pip install lxml
    (tensorflow1) C:\> pip install Cython
    (tensorflow1) C:\> pip install contextlib2
    (tensorflow1) C:\> pip install jupyter
    (tensorflow1) C:\> pip install matplotlib
    (tensorflow1) C:\> pip install pandas
    (tensorflow1) C:\> pip install opencv-python

(Note: The 'pandas' and 'opencv-python' packages are not needed by TensorFlow, but they are used in the Python scripts to generate TFRecords and to work with images, videos, and webcam feeds.)
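After installing everything, a quick way to confirm the packages landed in the active environment is to ask Python whether it can locate them. The sketch below is not part of the repository; the `missing_modules` helper and the pip-name to import-name mapping (pillow as `PIL`, opencv-python as `cv2`, protobuf as `google.protobuf`) are my assumptions, and command-line tools such as jupyter are not checked.

```python
import importlib.util

# Import names for the packages installed above (assumed mapping:
# pillow -> PIL, opencv-python -> cv2, protobuf -> google.protobuf).
REQUIRED_MODULES = [
    "tensorflow", "google.protobuf", "PIL", "lxml", "Cython",
    "contextlib2", "matplotlib", "pandas", "cv2",
]


def missing_modules(module_names):
    """Return the module names that cannot be located in this environment."""
    missing = []
    for name in module_names:
        try:
            found = importlib.util.find_spec(name) is not None
        except (ImportError, ValueError):
            # Raised when a parent package (e.g. "google") is absent.
            found = False
        if not found:
            missing.append(name)
    return missing


# An empty list means every module in REQUIRED_MODULES was found.
print(missing_modules(REQUIRED_MODULES))
```

Run it from the Anaconda Prompt with the "tensorflow1" environment activated; anything it prints is a candidate for another pip or conda install.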

2e. Configure PYTHONPATH environment variable

A PYTHONPATH variable must be created that points to the \models, \models\research, and \models\research\slim directories. Do this by issuing the following command (from any directory):

    (tensorflow1) C:\> set PYTHONPATH=C:\tensorflow1\models;C:\tensorflow1\models\research;C:\tensorflow1\models\research\slim

(Note: Every time the "tensorflow1" virtual environment is exited, the PYTHONPATH variable is reset and needs to be set up again. You can use "echo %PYTHONPATH%" to see if it has been set or not.)
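Because the variable is lost whenever the environment is exited, it can help to check it from Python before launching a long job. This is a minimal sketch, assuming the three directories from this step; `missing_pythonpath_dirs` is a hypothetical helper, not something the Object Detection API provides.

```python
import os

# Directories this step adds to PYTHONPATH.
REQUIRED_DIRS = [
    r"C:\tensorflow1\models",
    r"C:\tensorflow1\models\research",
    r"C:\tensorflow1\models\research\slim",
]


def missing_pythonpath_dirs(pythonpath, required=REQUIRED_DIRS, sep=";"):
    """Return the required directories that are absent from a PYTHONPATH string.

    The comparison ignores case and trailing slashes, as Windows paths do.
    """
    def norm(path):
        return path.strip().rstrip("\\/").lower()

    present = {norm(p) for p in pythonpath.split(sep) if p.strip()}
    return [d for d in required if norm(d) not in present]


# Check the variable of the current session; an empty list means all set.
print(missing_pythonpath_dirs(os.environ.get("PYTHONPATH", "")))
```

The separator defaults to ";" because that is what Windows uses; on Linux you would pass sep=":" and your own directory list.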

2f. Compile Protobufs and run setup.py

Next, compile the Protobuf files, which are used by TensorFlow to configure model and training parameters. Unfortunately, the short protoc compilation command posted on TensorFlow's Object Detection API installation page does not work on Windows. Every .proto file in the \object_detection\protos directory must be called out individually by the command.

In the Anaconda Command Prompt, change directories to the \models\research directory:

    (tensorflow1) C:\> cd C:\tensorflow1\models\research

Then copy and paste the following command into the command line and press Enter:

    protoc --python_out=. .\object_detection\protos\anchor_generator.proto .\object_detection\protos\argmax_matcher.proto .\object_detection\protos\bipartite_matcher.proto .\object_detection\protos\box_coder.proto .\object_detection\protos\box_predictor.proto .\object_detection\protos\eval.proto .\object_detection\protos\faster_rcnn.proto .\object_detection\protos\faster_rcnn_box_coder.proto .\object_detection\protos\grid_anchor_generator.proto .\object_detection\protos\hyperparams.proto .\object_detection\protos\image_resizer.proto .\object_detection\protos\input_reader.proto .\object_detection\protos\losses.proto .\object_detection\protos\matcher.proto .\object_detection\protos\mean_stddev_box_coder.proto .\object_detection\protos\model.proto .\object_detection\protos\optimizer.proto .\object_detection\protos\pipeline.proto .\object_detection\protos\post_processing.proto .\object_detection\protos\preprocessor.proto .\object_detection\protos\region_similarity_calculator.proto .\object_detection\protos\square_box_coder.proto .\object_detection\protos\ssd.proto .\object_detection\protos\ssd_anchor_generator.proto .\object_detection\protos\string_int_label_map.proto .\object_detection\protos\train.proto .\object_detection\protos\keypoint_box_coder.proto .\object_detection\protos\multiscale_anchor_generator.proto .\object_detection\protos\graph_rewriter.proto .\object_detection\protos\calibration.proto .\object_detection\protos\flexible_grid_anchor_generator.proto

This creates a name_pb2.py file from every name.proto file in the \object_detection\protos folder.

(Note: TensorFlow occasionally adds new .proto files to the \protos folder. If you get an error saying ImportError: cannot import name 'something_something_pb2' , you may need to update the protoc command to include the new .proto files.)
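One way to avoid updating the long command by hand is to let Python list the .proto files and assemble the arguments. The following is only a sketch of that idea, not the method this tutorial was written with; `build_protoc_command` is a hypothetical helper, and it assumes protoc is on the PATH of the active environment.

```python
import glob
import os


def build_protoc_command(research_dir):
    """Build a protoc argument list covering every .proto file found.

    File paths are made relative to research_dir, matching the form of the
    hand-written command, so protoc should be run from that directory.
    """
    pattern = os.path.join(research_dir, "object_detection", "protos", "*.proto")
    proto_files = sorted(glob.glob(pattern))
    relative = [os.path.relpath(path, research_dir) for path in proto_files]
    return ["protoc", "--python_out=."] + relative
```

To actually compile, the list could be handed to `subprocess.run(command, cwd=research_dir, check=True)`, where `research_dir` would be `r"C:\tensorflow1\models\research"` in this tutorial's layout.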

Finally, run the following commands from the C:\tensorflow1\models\research directory:

    (tensorflow1) C:\tensorflow1\models\research> python setup.py build
    (tensorflow1) C:\tensorflow1\models\research> python setup.py install

2g. Test TensorFlow setup to verify it works

The TensorFlow Object Detection API is now all set up to use pre-trained models for object detection, or to train a new one. You can test it out and verify your installation is working by launching the object_detection_tutorial.ipynb script with Jupyter. From the \object_detection directory, issue this command:

    (tensorflow1) C:\tensorflow1\models\research\object_detection> jupyter notebook object_detection_tutorial.ipynb

This opens the script in your default web browser and allows you to step through the code one section at a time. You can step through each section by clicking the "Run" button in the upper toolbar. The section is done running when the "In [ * ]" text next to the section populates with a number (e.g. "In [1]").

(Note: part of the script downloads the ssd_mobilenet_v1 model from GitHub, which is about 74MB. This means it will take some time to complete the section, so be patient.)

Once you have stepped all the way through the script, you should see two labeled images at the bottom section of the page. If you see this, then everything is working properly! If not, the bottom section will report any errors encountered. See the Appendix for a list of errors I encountered while setting this up.

Note: If you run the full Jupyter Notebook without getting any errors, but the labeled pictures still don't appear, try this: go in to object_detection/utils/visualization_utils.py and comment out the import statements around lines 29 and 30 that include matplotlib. Then, try re-running the Jupyter notebook.

3. Gather and Label Pictures

Now that the TensorFlow Object Detection API is all set up and ready to go, we need to provide the images it will use to train a new detection classifier.

3a. Gather Pictures

TensorFlow needs hundreds of images of an object to train a good detection classifier. To train a robust classifier, the training images should have random objects in the image along with the desired objects, and should have a variety of backgrounds and lighting conditions. There should be some images where the desired object is partially obscured, overlapped with something else, or only halfway in the picture.

For my Pinochle Card Detection classifier, I have six different objects I want to detect (the card ranks nine, ten, jack, queen, king, and ace; I am not trying to detect suit, just rank). I used my iPhone to take about 40 pictures of each card on its own, with various other non-desired objects in the pictures. Then, I took about another 100 pictures with multiple cards in the picture. I know I want to be able to detect the cards when they're overlapping, so I made sure to have the cards be overlapped in many images.

You can use your phone to take pictures of the objects or download images of the objects from Google Image Search. I recommend having at least 200 pictures overall. I used 311 pictures to train my card detector.

Make sure the images aren't too large. They should be less than 200KB each, and their resolution shouldn't be more than 720x1280. The larger the images are, the longer it will take to train the classifier. You can use the resizer.py script in this repository to reduce the size of the images.
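To see which pictures still exceed the file-size guideline, a small standard-library check is enough. This sketch only looks at file size; checking the resolution would need an imaging library such as Pillow, which is left out here. The `oversized_images` helper and its default extension list are my assumptions.

```python
import os

MAX_BYTES = 200 * 1024  # the 200KB guideline


def oversized_images(image_dir, max_bytes=MAX_BYTES,
                     extensions=(".jpg", ".jpeg", ".png")):
    """Return sorted image file names in image_dir that are max_bytes or larger."""
    too_big = []
    for name in sorted(os.listdir(image_dir)):
        if not name.lower().endswith(extensions):
            continue  # skip .xml label files and anything else
        path = os.path.join(image_dir, name)
        if os.path.isfile(path) and os.path.getsize(path) >= max_bytes:
            too_big.append(name)
    return too_big
```

Calling `oversized_images("images/train")` from the \object_detection folder would list the files worth running through resizer.py.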

After you have all the pictures you need, move 20% of them to the \object_detection\images\test directory, and 80% of them to the \object_detection\images\train directory. Make sure there are a variety of pictures in both the \test and \train directories.
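If you would rather not pick the 20% by hand, a seeded shuffle gives a reproducible split. This is a sketch under my own assumptions (the `split_train_test` name, the fixed seed, and rounding to the nearest whole image); it only decides which file goes where and leaves the actual moving to you.

```python
import random


def split_train_test(filenames, test_fraction=0.2, seed=0):
    """Shuffle file names reproducibly and return (train, test) lists."""
    shuffled = sorted(filenames)            # start from a stable order
    random.Random(seed).shuffle(shuffled)   # seeded, so reruns agree
    n_test = round(len(shuffled) * test_fraction)
    return shuffled[n_test:], shuffled[:n_test]
```

With 311 pictures this yields 62 test images and 249 training images. A random split does not guarantee variety in both folders, so it is still worth glancing over the result.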

3b. Label Pictures

Here comes the fun part! With all the pictures gathered, it's time to label the desired objects in every picture. LabelImg is a great tool for labeling images, and its GitHub page has very clear instructions on how to install and use it.

LabelImg GitHub link

LabelImg download link

Download and install LabelImg, point it to your \images\train directory, and then draw a box around each object in each image. Repeat the process for all the images in the \images\test directory. This will take a while!

LabelImg saves a .xml file containing the label data for each image. These .xml files will be used to generate TFRecords, which are one of the inputs to the TensorFlow trainer. Once you have labeled and saved each image, there will be one .xml file for each image in the \test and \train directories.
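To get a feel for what is inside those .xml files, the sketch below reads the bounding boxes out of one. It assumes LabelImg was left in its Pascal VOC output mode; the sample XML is hand-written with made-up values, and `read_boxes` is a hypothetical helper rather than code from this repository.

```python
import xml.etree.ElementTree as ET

# A hand-written sample in the Pascal VOC layout (hypothetical values).
SAMPLE_XML = """
<annotation>
  <filename>cards1.jpg</filename>
  <size><width>1280</width><height>720</height><depth>3</depth></size>
  <object>
    <name>queen</name>
    <bndbox><xmin>100</xmin><ymin>200</ymin><xmax>300</xmax><ymax>400</ymax></bndbox>
  </object>
  <object>
    <name>ace</name>
    <bndbox><xmin>500</xmin><ymin>50</ymin><xmax>650</xmax><ymax>260</ymax></bndbox>
  </object>
</annotation>
"""


def read_boxes(xml_text):
    """Return (filename, class, xmin, ymin, xmax, ymax) rows from one label file."""
    root = ET.fromstring(xml_text)
    filename = root.findtext("filename")
    rows = []
    for obj in root.findall("object"):
        box = obj.find("bndbox")
        coords = [int(box.findtext(tag)) for tag in ("xmin", "ymin", "xmax", "ymax")]
        rows.append((filename, obj.findtext("name"), *coords))
    return rows


for row in read_boxes(SAMPLE_XML):
    print(row)
```

Each labeled object becomes one row, which is roughly the shape of data the .csv files in the next step are built from.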

4. Generate Training Data

With the images labeled, it's time to generate the TFRecords that serve as input data to the TensorFlow training model. This tutorial uses the xml_to_csv.py and generate_tfrecord.py scripts from Dat Tran's Raccoon Detector dataset, with some slight modifications to work with our directory structure.

First, the image .xml data will be used to create .csv files containing all the data for the train and test images. From the \object_detection folder, issue the following command in the Anaconda command prompt:

    (tensorflow1) C:\tensorflow1\models\research\object_detection> python xml_to_csv.py

This creates a train_labels.csv and test_labels.csv file in the \object_detection\images folder.

Next, open the generate_tfrecord.py file in a text editor. Replace the label map starting at line 31 with your own label map, where each object is assigned an ID number. This same number assignment will be used when configuring the labelmap.pbtxt file in Step 5a.

For example, say you are training a classifier to detect basketballs, shirts, and shoes. You will replace the following code in generate_tfrecord.py:

    # TO-DO replace this with label map
    def class_text_to_int(row_label):
        if row_label == 'nine':
            return 1
        elif row_label == 'ten':
            return 2
        elif row_label == 'jack':
            return 3
        elif row_label == 'queen':
            return 4
        elif row_label == 'king':
            return 5
        elif row_label == 'ace':
            return 6
        else:
            None

With this:

    # TO-DO replace this with label map
    def class_text_to_int(row_label):
        if row_label == 'basketball':
            return 1
        elif row_label == 'shirt':
            return 2
        elif row_label == 'shoe':
            return 3
        else:
            None

Then, generate the TFRecord files by issuing these commands from the \object_detection folder:

    python generate_tfrecord.py --csv_input=images\train_labels.csv --image_dir=images\train --output_path=train.record
    python generate_tfrecord.py --csv_input=images\test_labels.csv --image_dir=images\test --output_path=test.record

These generate a train.record and a test.record file in \object_detection. These will be used to train the new object detection classifier.
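Since the ID numbers in generate_tfrecord.py have to agree with the label map, one option is to derive both from a single list of class names. This is a sketch of that idea and not how the repository's script is written; `CLASS_NAMES`, `LABEL_TO_ID`, and `label_map_text` are names I made up for illustration.

```python
# Hypothetical class list; the order determines the ID numbers (starting at 1).
CLASS_NAMES = ["basketball", "shirt", "shoe"]

LABEL_TO_ID = {name: i for i, name in enumerate(CLASS_NAMES, start=1)}


def class_text_to_int(row_label):
    """Dictionary-based equivalent of the if/elif chain; None if unknown."""
    return LABEL_TO_ID.get(row_label)


def label_map_text(class_names):
    """Render class names as label map entries in the item { id, name } form."""
    items = []
    for i, name in enumerate(class_names, start=1):
        items.append("item {\n  id: %d\n  name: '%s'\n}\n" % (i, name))
    return "\n".join(items)


print(label_map_text(CLASS_NAMES))
```

The printed text can be pasted into the label map file, so the two places cannot drift apart when a class is added or reordered.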

5. Create Label Map and Configure Training

The last thing to do before training is to create a label map and edit the training configuration file.

5a. Label map

The label map tells the trainer what each object is by defining a mapping of class names to class ID numbers. Use a text editor to create a new file and save it as labelmap.pbtxt in the C:\tensorflow1\models\research\object_detection\training folder. (Make sure the file type is .pbtxt, not .txt !) In the text editor, copy or type in the label map in the format below (the example below is the label map for my Pinochle Deck Card Detector):

    item {
      id: 1
      name: 'nine'
    }

    item {
      id: 2
      name: 'ten'
    }

    item {
      id: 3
      name: 'jack'
    }

    item {
      id: 4
      name: 'queen'
    }

    item {
      id: 5
      name: 'king'
    }

    item {
      id: 6
      name: 'ace'
    }

The label map ID numbers should be the same as what is defined in the generate_tfrecord.py file. For the basketball, shirt, and shoe detector example mentioned in Step 4, the labelmap.pbtxt file will look like:

    item {
      id: 1
      name: 'basketball'
    }

    item {
      id: 2
      name: 'shirt'
    }

    item {
      id: 3
      name: 'shoe'
    }

5b. Configure training

Finally, the object detection training pipeline must be configured. It defines which model and what parameters will be used for training. This is the last step before running training!

Navigate to C:\tensorflow1\models\research\object_detection\samples\configs and copy the faster_rcnn_inception_v2_pets.config file into the \object_detection\training directory. Then, open the file with a text editor. There are several changes to make to the .config file, mainly changing the number of classes and examples, and adding the file paths to the training data.

Make the following changes to the faster_rcnn_inception_v2_pets.config file. Note: The paths must be entered with single forward slashes (NOT backslashes), or TensorFlow will give a file path error when trying to train the model! Also, the paths must be in double quotation marks ( " ), not single quotation marks ( ' ).

- Line 9. Change num_classes to the number of different objects you want the classifier to detect. For the above basketball, shirt, and shoe detector, it would be num_classes : 3 .

- Line 106. Change fine_tune_checkpoint to:

  - fine_tune_checkpoint : "C:/tensorflow1/models/research/object_detection/faster_rcnn_inception_v2_coco_2018_01_28/model.ckpt"

- Lines 123 and 125. In the train_input_reader section, change input_path and label_map_path to:

  - input_path : "C:/tensorflow1/models/research/object_detection/train.record"

  - label_map_path: "C:/tensorflow1/models/research/object_detection/training/labelmap.pbtxt"

- Line 130. Change num_examples to the number of images you have in the \images\test directory.

- Lines 135 and 137. In the eval_input_reader section, change input_path and label_map_path to:

  - input_path : "C:/tensorflow1/models/research/object_detection/test.record"

  - label_map_path: "C:/tensorflow1/models/research/object_detection/training/labelmap.pbtxt"

Save the file after the changes have been made. That's it! The training job is all configured and ready to go!
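The two path rules (forward slashes, double quotation marks) are easy to get wrong when copying paths out of Windows Explorer. Here is a minimal sketch of a converter and a checker; both function names are hypothetical and neither touches the .config file itself.

```python
def to_config_path(windows_path):
    """Convert a Windows path to the quoted, forward-slash form used in the config."""
    return '"%s"' % windows_path.replace("\\", "/")


def path_problems(config_value):
    """List the ways a path value from the config breaks the two rules."""
    problems = []
    if "\\" in config_value:
        problems.append("contains backslashes")
    quoted = (len(config_value) >= 2
              and config_value.startswith('"')
              and config_value.endswith('"'))
    if not quoted:
        problems.append("not wrapped in double quotation marks")
    return problems


print(to_config_path(r"C:\tensorflow1\models\research\object_detection\train.record"))
```

Pasting a value copied from your edited config into `path_problems` returns an empty list when it follows both rules.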

6. Run the Training

UPDATE 9/26/18: As of version 1.9, TensorFlow has deprecated the "train.py" file and replaced it with "model_main.py" file. I haven't been able to get model_main.py to work correctly yet (I run in to errors related to pycocotools). Fortunately, the train.py file is still available in the /object_detection/legacy folder. Simply move train.py from /object_detection/legacy into the /object_detection folder and then continue following the steps below.

Here we go! From the \object_detection directory, issue the following command to begin training:

    python train.py --logtostderr --train_dir=training/ --pipeline_config_path=training/faster_rcnn_inception_v2_pets.config

If everything has been set up correctly, TensorFlow will initialize the training. The initialization can take up to 30 seconds before the actual training begins. When training begins, it will look like this:

Each step of training reports the loss. It will start high and get lower and lower as training progresses. For my training on the Faster-RCNN-Inception-V2 model, it started at about 3.0 and quickly dropped below 0.8. I recommend allowing your model to train until the loss consistently drops below 0.05, which will take about 40,000 steps, or about 2 hours (depending on how powerful your CPU and GPU are). Note: The loss numbers will be different if a different model is used. MobileNet-SSD starts with a loss of about 20, and should be trained until the loss is consistently under 2.
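"Consistently below" is a judgment call. One way to make it concrete, purely as an illustration, is to require that every one of the most recent loss values sits under the threshold. The window of 100 steps below is my assumption, not a number from this tutorial, and `consistently_below` is a hypothetical helper that expects loss values you have collected yourself.

```python
def consistently_below(losses, threshold=0.05, window=100):
    """True when the most recent `window` loss values are all below threshold."""
    if len(losses) < window:
        return False  # not enough history to call it consistent
    return all(loss < threshold for loss in losses[-window:])
```

For a MobileNet-SSD run, the same check would be called with threshold=2 to match the guideline for that model.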

You can view the progress of the training job by using TensorBoard. To do this, open a new instance of Anaconda Prompt, activate the tensorflow1 virtual environment, change to the C:\tensorflow1\models\research\object_detection directory, and issue the following command:

    (tensorflow1) C:\tensorflow1\models\research\object_detection>tensorboard --logdir=training

This will create a webpage on your local machine at YourPCName:6006, which can be viewed through a web browser. The TensorBoard page provides information and graphs that show how the training is progressing. One important graph is the Loss graph, which shows the overall loss of the classifier over time.

The training routine periodically saves checkpoints about every five minutes. You can terminate the training by pressing Ctrl+C while in the command prompt window. I typically wait until just after a checkpoint has been saved to terminate the training. You can terminate training and start it later, and it will restart from the last saved checkpoint. The checkpoint at the highest number of steps will be used to generate the frozen inference graph.

7. Export Inference Graph

Now that training is complete, the last step is to generate the frozen inference graph (.pb file). From the \object_detection folder, issue the following command, where "XXXX" in "model.ckpt-XXXX" should be replaced with the highest-numbered .ckpt file in the training folder:

    python export_inference_graph.py --input_type image_tensor --pipeline_config_path training/faster_rcnn_inception_v2_pets.config --trained_checkpoint_prefix training/model.ckpt-XXXX --output_directory inference_graph

This creates a frozen_inference_graph.pb file in the \object_detection\inference_graph folder. The .pb file contains the object detection classifier.
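Picking out the highest-numbered checkpoint by eye is error-prone because the step numbers sort numerically, not alphabetically. The sketch below finds it from a list of file names, assuming the checkpoint files follow the model.ckpt-<step>.<suffix> naming pattern (for example model.ckpt-40000.index); `latest_checkpoint_step` is a hypothetical helper.

```python
import re

# Matches names such as "model.ckpt-40000.index" and captures the step number.
CKPT_PATTERN = re.compile(r"^model\.ckpt-(\d+)\.")


def latest_checkpoint_step(filenames):
    """Return the largest step number among checkpoint file names, or None."""
    steps = []
    for name in filenames:
        match = CKPT_PATTERN.match(name)
        if match:
            steps.append(int(match.group(1)))  # compare as numbers, not text
    return max(steps) if steps else None
```

Passing it `os.listdir("training")` from the \object_detection folder would give the number to substitute for XXXX.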

8. Use Your Newly Trained Object Detection Classifier!

The object detection classifier is all ready to go! I've written Python scripts to test it out on an image, video, or webcam feed.

Before running the Python scripts, you need to modify the NUM_CLASSES variable in the script to equal the number of classes you want to detect. (For my Pinochle Card Detector, there are six cards I want to detect, so NUM_CLASSES = 6.)
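If you are unsure what number to use, it should match the number of item entries in your label map. The snippet below counts them from the label map text; it is a rough sketch that simply looks for `item {` openings, and `count_label_map_items` is a name I made up.

```python
import re


def count_label_map_items(label_map_text):
    """Count the `item {` entries in label map text."""
    return len(re.findall(r"\bitem\s*\{", label_map_text))


EXAMPLE = """
item { id: 1 name: 'basketball' }
item { id: 2 name: 'shirt' }
item { id: 3 name: 'shoe' }
"""
print(count_label_map_items(EXAMPLE))
```

Reading your own training/labelmap.pbtxt file and passing its contents in gives the value NUM_CLASSES should be set to.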

To test your object detector, motion a picture of the object or objects into the \object_detection folder, and change the IMAGE_NAME variable in the Object_detection_image.py to match the file name of the picture. Alternatively, yous can utilise a video of the objects (using Object_detection_video.py), or just plug in a USB webcam and point it at the objects (using Object_detection_webcam.py).

To run any of the scripts, type "idle" in the Anaconda Command Prompt (with the "tensorflow1" virtual environment activated) and press ENTER. This will open IDLE, and from there, you can open any of the scripts and run them.

If everything is working properly, the object detector will initialize for about 10 seconds and then display a window showing any objects it's detected in the image!

If you encounter errors, please check out the Appendix: it has a list of errors that I ran into while setting up my object detection classifier. You can also try Googling the error. There is usually useful information on Stack Exchange or in TensorFlow's Issues on GitHub.

Appendix: Common Errors

It appears that the TensorFlow Object Detection API was developed on a Linux-based operating system, and most of the directions given by the documentation are for a Linux OS. Trying to get a Linux-developed software library to work on Windows can be challenging. There are many little snags that I ran into while trying to set up tensorflow-gpu to train an object detection classifier on Windows 10. This Appendix is a list of errors I ran into, and their resolutions.

1. ModuleNotFoundError: No module named 'deployment' or No module named 'nets'

This error occurs when you try to run object_detection_tutorial.ipynb or train.py and you don't have the PATH and PYTHONPATH environment variables set correctly. Exit the virtual environment by closing and re-opening the Anaconda Prompt window. Then, issue "activate tensorflow1" to re-enter the environment, and then issue the commands given in Step 2e.

You can use "echo %PATH%" and "echo %PYTHONPATH%" to check the environment variables and make sure they are set up correctly.
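Another way to confirm the fix from inside Python is to ask whether the two modules named in the error can be located at all. This is a minimal sketch using only the standard library; the helper name missing_modules is hypothetical.

```python
import importlib.util


def missing_modules(names):
    """Return the top-level module names that Python cannot find on its search path."""
    return [name for name in names if importlib.util.find_spec(name) is None]


# 'nets' and 'deployment' are the modules named in the error message.
print(missing_modules(["nets", "deployment"]))
```

An empty list means both modules can be found. Any name that is printed is still missing from the search path, which points back to the PYTHONPATH setting.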

Also, make sure you have run these commands from the \models\research directory:

python setup.py build
python setup.py install

2. ImportError: cannot import name 'preprocessor_pb2'

ImportError: cannot import name 'string_int_label_map_pb2'

(or similar errors with other pb2 files)

This occurs when the protobuf files (in this case, preprocessor.proto) have not been compiled. Re-run the protoc command given in Step 2f. Check the \object_detection\protos folder to make sure there is a name_pb2.py file for every name.proto file.
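Checking the folder by hand is tedious when it holds many .proto files, so the comparison can be scripted. This is a minimal sketch under the naming rule described above (name.proto compiles to name_pb2.py); the function name missing_pb2_files is hypothetical.

```python
import os


def missing_pb2_files(protos_dir):
    """Return the .proto file names in protos_dir that have no matching _pb2.py file."""
    names = set(os.listdir(protos_dir))
    missing = []
    for name in sorted(names):
        base, ext = os.path.splitext(name)
        # name.proto should have been compiled to name_pb2.py in the same folder.
        if ext == ".proto" and base + "_pb2.py" not in names:
            missing.append(name)
    return missing
```

Pass it the path to your \object_detection\protos folder. An empty list means every .proto file has been compiled.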

3. object_detection/protos/.proto: No such file or directory

This occurs when you try to run the "protoc object_detection/protos/*.proto --python_out=." command given on the TensorFlow Object Detection API installation page. Sorry, it doesn't work on Windows! Copy and paste the full command given in Step 2f instead. There's probably a more graceful way to do it, but I don't know what it is.

4. Unsuccessful TensorSliceReader constructor: Failed to get "file path" … The filename, directory name, or volume label syntax is incorrect.

This error occurs when the file paths in the training configuration file (faster_rcnn_inception_v2_pets.config or similar) have been entered with backslashes instead of forward slashes. Open the .config file and make sure all file paths use forward slashes, for example "C:/path/to/model.file".
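A quick way to spot offending paths is to scan the config text for quoted values that contain a backslash. This is a minimal sketch, not part of the repository: the function name lines_with_backslash_paths is hypothetical, the sample paths are made up, and it assumes paths appear as double-quoted strings on a single line.

```python
import re

# Finds double-quoted values, such as the path in: input_path: "C:/data/train.record"
_QUOTED = re.compile(r'"([^"]*)"')


def lines_with_backslash_paths(config_text):
    """Return (line_number, line) pairs whose quoted values contain a backslash."""
    hits = []
    for number, line in enumerate(config_text.splitlines(), start=1):
        if any("\\" in value for value in _QUOTED.findall(line)):
            hits.append((number, line.strip()))
    return hits


# Illustrative config fragment: line 1 uses backslashes, line 2 is fine.
sample = "\n".join([
    r'fine_tune_checkpoint: "C:\models\model.ckpt"',
    'input_path: "C:/data/train.record"',
])
for number, line in lines_with_backslash_paths(sample):
    print(number, line)
```

Any line it reports should be rewritten with forward slashes before training is restarted.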

5. ValueError: Tried to convert 't' to a tensor and failed. Error: Argument must be a dense tensor: range(0, 3) - got shape [3], but wanted [].

The issue is with models/research/object_detection/utils/learning_schedules.py. Currently it is:

rate_index = tf.reduce_max(tf.where(tf.greater_equal(global_step, boundaries), range(num_boundaries), [0] * num_boundaries))

Wrap list() around the range() like this:

rate_index = tf.reduce_max(tf.where(tf.greater_equal(global_step, boundaries), list(range(num_boundaries)), [0] * num_boundaries))

Ref: Tensorflow Issue#3705

6. ImportError: DLL load failed: The specified procedure could not be found. (or other DLL-related errors)

This error occurs because the CUDA and cuDNN versions you have installed are not compatible with the version of TensorFlow you are using. The easiest way to resolve this error is to use Anaconda's cudatoolkit package rather than manually installing CUDA and cuDNN. If you ran into these errors, try creating a new Anaconda virtual environment:

conda create -n tensorflow2 pip python=3.5

Then, once inside the environment, install TensorFlow using CONDA rather than PIP:

conda install tensorflow-gpu

Then restart this guide from Step 2 (but you can skip the part where you install TensorFlow in Step 2d).

7. In Step 2g, the Jupyter Notebook runs all the way through with no errors, but no pictures are displayed at the end.

If you run the full Jupyter Notebook without getting any errors, but the labeled pictures still don't appear, try this: go into object_detection/utils/visualization_utils.py and comment out the import statements around lines 29 and 30 that include matplotlib. Then, try re-running the Jupyter notebook. (The visualization_utils.py script changes quite a bit, so it might not be exactly lines 29 and 30.)

Source: https://github.com/EdjeElectronics/TensorFlow-Object-Detection-API-Tutorial-Train-Multiple-Objects-Windows-10

Posted by: wongthadespecte.blogspot.com
